A Conversation With AI Engineer Jagadish Mahendran Whose Invention ‘Sees’ for Visually Impaired People

- Using technology developed by Intel, Mahendran has created a voice-activated AI backpack that guides people with visual impairments in outdoor environments.

When AI perception engineer Jagadish Mahendran heard about his friend Breean Cox’s daily challenges navigating the world as a blind person, he immediately thought of his own work in artificial intelligence. “When I met my visually impaired friend, Breean, I was struck by the irony that while I have been teaching robots to see, there are many people who cannot see and need help,” said Mahendran.

Struck by the irony that he had helped build machines, including a shopping robot that could “see” stocked shelves and a kitchen robot, but nothing for people without vision, Mahendran got busy. “This fueled my motivation to build the visual assistance system as soon as possible,” says the man on a mission: to help visually impaired people see. “There are so many people who cannot see and need a lot of help.”

Using technology developed by Intel, Mahendran has created a voice-activated AI backpack that guides people with visual impairments in outdoor environments, and the system costs just $800, compared with the thousands of dollars one may have to pay for similar technology.

Mahendran spoke with American Kahani from his home in Canada, where the robotics team he works for is based, about his path-breaking assistive technology and his hopes for the future.

The World Health Organization estimates that 285 million people globally are visually impaired, meaning they have vision problems that can’t be corrected with glasses. Of that group, 39 million are blind. For blind people, navigating an outdoor environment can be both difficult and potentially hazardous.

Meanwhile, visual assistance systems for navigation are fairly limited and range from Global Positioning System-based, voice-assisted smartphone apps to camera-enabled smart walking stick solutions and guide dogs. But none are the perfect solution. Guide dogs can be expensive (and some people are allergic).

Assistive Technology

White canes, meanwhile, won’t help avoid overhead hazards, such as hanging tree branches. The other assistive technology devices aren’t always ideal. Voice-assisted smartphone apps can give visually impaired people turn-by-turn directions, but they can’t help them avoid obstacles. Smart glasses usually cost thousands of dollars, while smart canes require a person to dedicate one hand to the tech — not great if they are, say, trying to carry groceries home from the store.

While these systems lack the depth perception needed for independent navigation, the assistive technology developed by Bangalore native Mahendran may be the answer.

The idea for this technology, however, first struck Mahendran in 2013, when he started his master’s in AI at the University of Georgia. “Back then I did not make much progress, due to various reasons – ‘deep learning’ was not mainstream in computer vision and I was new to the field. The hardware was also very different back then.”

Today, with a fast-growing AI community and companies like Intel, “we are transforming this AI space so quickly and efficiently and are able to do things we couldn’t do a few years ago.” But it wasn’t until he met his “real inspiration,” Breean Cox, that Mahendran realized his vision.

To aid his work, Mahendran assembled a team of like-minded volunteers who want to make a positive impact on the community. The team, named MIRA, draws volunteers from many walks of life, from mechanical design, product design and marketing to community service, arts and law, including members of the visually impaired community. “Our team mission is to provide an open-source AI-based visual assistance system for free and increase the involvement of visually impaired people in their daily activities, and we know this AI space really well.” Mahendran and his collaborators interviewed several people with visual impairments to ensure the device would address the challenges they faced. The result: a cost-effective AI backpack.

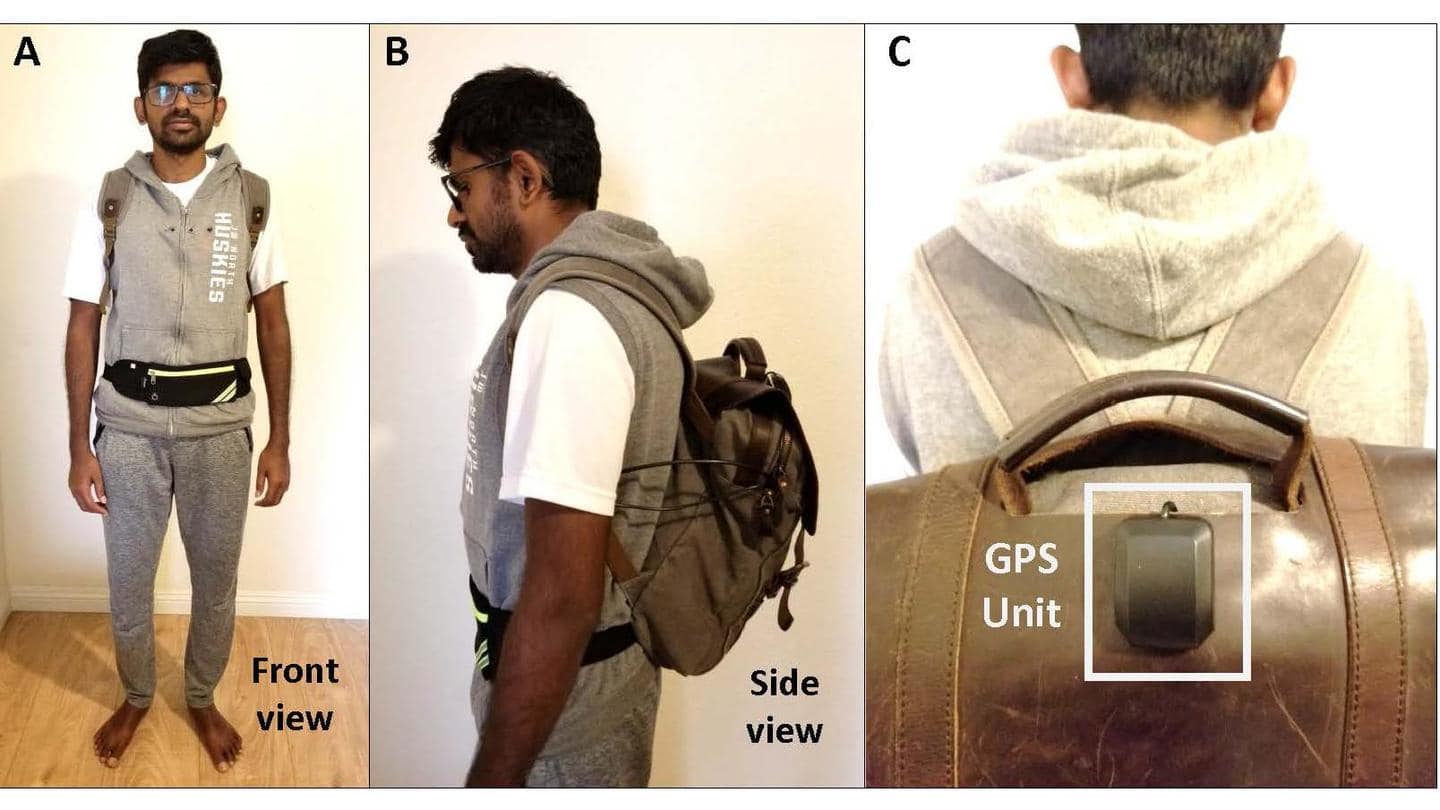

Describing the as-yet-unnamed system, which has an eight-hour battery life, as “simple, wearable, and unobtrusive,” Mahendran adds, “One of the problems visually impaired people face is that they gain a lot of attention when walking in the street. Our focus is to reduce this and to allow the user to mingle with the crowd and not stand out.”

Mahendran and his team learned this and more from focus groups and interviews they conducted with visually impaired people. Armed with these insights, they developed a system consisting of a small backpack, a vest, a Bluetooth earpiece and a fanny pack. Currently, the vest includes hidden Intel sensors and a front-facing camera, while the backpack and fanny pack house a small computing unit and power source. The Luxonis OAK-D unit, powered by Intel technology and located in the vest and fanny pack, is an AI device that processes camera data almost instantly to interpret the world around the user.

The OAK-D unit is a versatile and powerful AI device that runs on an Intel Movidius VPU. It can run advanced neural networks while providing accelerated computer vision functions, a real-time depth map from its stereo camera pair, and color information from a single 4K camera.
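For readers curious how a stereo pair yields a depth map: in a rectified stereo camera, a point that appears `d` pixels apart in the left and right images lies at depth Z = f · B / d, where f is the focal length in pixels and B is the distance between the two lenses. The sketch below only illustrates that textbook relationship; it is not Luxonis or MIRA code, and the function name and the sample numbers are hypothetical values chosen for the example.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth in metres of a point seen by a rectified stereo pair: Z = f * B / d.

    focal_px     -- focal length in pixels (hypothetical example value below)
    baseline_m   -- distance between the two lenses, in metres (assumed)
    disparity_px -- horizontal pixel offset of the same point in the two images
    """
    if disparity_px <= 0:
        # Zero disparity means no match was found, or the point is effectively at infinity.
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


# Illustrative values only: f = 450 px, B = 7.5 cm, d = 15 px -> about 2.25 m.
print(depth_from_disparity(450, 0.075, 15))
```

Because depth is inversely proportional to disparity, nearby obstacles produce large, easily measured disparities, while depth estimates for distant objects become coarser, which suits a system focused on hazards close to the user.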

A Bluetooth-enabled earphone lets the user interact with the system through voice queries and commands, and the system responds with verbal information. The command “start” activates the system. “Describe” collects information about objects within the camera’s view, prompting the AI to list nearby objects along with their clock positions, “for example, person at 10 o’clock.” “Locate” pulls up locations saved with GPS, such as an office, a home address, a grocery store or even “their favorite ice-cream shop,” by their custom names. “In instances where the user feels lost, they can request the system with the key word ‘locate’ and the system will respond with information as to the nearby safe location. For example, if the user is 100 feet away from their favorite ice cream shop, the system will say ‘ice cream shop 100 feet.’ This will provide a reference for the user.”
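The two spoken formats described above, clock positions and “ice cream shop 100 feet,” can be produced with simple geometry. The sketch below is a hypothetical illustration, not the MIRA team’s software: the function names, the camera frame (x in metres to the user’s right, z in metres straight ahead), the 30-degrees-per-hour mapping and the rounding to the nearest 10 feet are all assumptions. Distances to saved places use the standard haversine great-circle formula on GPS coordinates.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in metres
FEET_PER_METRE = 1 / 0.3048


def clock_position(x: float, z: float) -> int:
    """Return a clock hour (1-12) for an object in an assumed camera frame.

    x = metres to the user's right, z = metres straight ahead.
    12 o'clock is straight ahead; objects to the left give 11, 10, ...
    """
    angle_deg = math.degrees(math.atan2(x, z))  # 0 = ahead, positive = right
    hour = round(angle_deg / 30) % 12  # each clock hour spans 30 degrees
    return 12 if hour == 0 else hour


def describe(label: str, x: float, z: float) -> str:
    """Hypothetical phrasing for one detected object."""
    return f"{label} at {clock_position(x, z)} o'clock"


def haversine_feet(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two GPS fixes, in feet."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a)) * FEET_PER_METRE


def locate(current: tuple[float, float], saved: dict[str, tuple[float, float]]) -> str:
    """Report the nearest saved place, rounded to the nearest 10 feet (assumed)."""
    name, feet = min(
        ((place, haversine_feet(*current, *pos)) for place, pos in saved.items()),
        key=lambda item: item[1],
    )
    return f"{name} {round(feet / 10) * 10} feet"


print(describe("person", -1.732, 1.0))  # person at 10 o'clock
print(locate((40.0, -75.0), {"ice cream shop": (40.000274, -75.0),
                             "office": (40.01, -75.0)}))  # ice cream shop 100 feet
```

A front-facing chest camera only sees roughly the 10 to 2 o’clock range, so in practice the clock hours reported for detected objects would cluster around 12.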

Keeping it Simple

“The system can also allow the user to send location information (GPS coordinates) and even pictures of where they are to preferred contacts.” Mahendran said he wanted to “keep communication simple,” so the user wouldn’t be overwhelmed with a constant barrage of audio about the surroundings. Instead, the system reads out short prompts. Users also hear the AI’s automatic warnings about potential dangers: to let them know a hanging branch is straight ahead, for example, the AI will say “top, front.” As the user moves through their environment, the system audibly conveys information about common obstacles, including signs, tree branches and pedestrians. “The backpack will allow people who are blind to walk freely and safely,” says Mahendran.
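One way to produce terse cues like “top, front” is to bin an obstacle’s 3D position from the depth map into a few coarse zones and stay silent about anything far away. The sketch below is a hypothetical illustration, not the MIRA team’s code: the function `warning`, the camera-frame convention (x to the right, y up, z forward, all in metres, camera mounted at chest height) and every threshold are assumptions picked for the example.

```python
from typing import Optional

# All thresholds are hypothetical, in metres, in an assumed chest-camera frame:
# x = right of the camera, y = above the camera, z = distance straight ahead.
WARN_DISTANCE_M = 2.0     # ignore obstacles farther ahead than this
SIDE_HALF_WIDTH_M = 0.4   # roughly half the width of a walking corridor
TOP_ABOVE_M = 0.3         # higher than this above the camera counts as "top"
BOTTOM_BELOW_M = -0.8     # lower than this counts as "bottom"


def warning(x: float, y: float, z: float) -> Optional[str]:
    """Return a short spoken cue such as "top, front", or None if no warning."""
    if z <= 0 or z > WARN_DISTANCE_M:
        return None  # behind the camera or too far away to matter yet

    if y > TOP_ABOVE_M:
        vertical = "top"      # e.g. a hanging branch
    elif y < BOTTOM_BELOW_M:
        vertical = "bottom"   # e.g. a low obstacle near the ground
    else:
        vertical = "middle"

    if x < -SIDE_HALF_WIDTH_M:
        horizontal = "left"
    elif x > SIDE_HALF_WIDTH_M:
        horizontal = "right"
    else:
        horizontal = "front"

    return f"{vertical}, {horizontal}"


print(warning(0.0, 0.6, 1.5))  # top, front
```

Keeping the vocabulary to a handful of words matches the stated goal of short prompts rather than a constant stream of audio.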

Mahendran’s prototype won the $3,000 grand prize at the Intel-sponsored OpenCV Spatial AI Competition in 2020. However, Mahendran tells American Kahani that he and his team have since come up with a simpler version of the prototype, which will soon head to the testing phase. The initial AI backpack prototype costs about $800, already thousands less than most smart glasses, and the team is working to bring the cost down even further. “We wish to create the best possible solution, at the least cost to the user,” says the socially minded Mahendran. “The unemployment rate amongst the visually impaired community is extremely high – more than 60 percent. With this in mind, the focus was to keep the cost as low as possible.”

He plans to keep the collaborative energy going and to make everything the team develops for the project, including the code and datasets, open source for other researchers. Mahendran has also submitted a research paper on the project that is awaiting review. Although the AI backpack isn’t for sale yet, Mahendran plans to start a GoFundMe campaign to help equip blind pedestrians who want to use the system. For now, the team is raising funds for testing and looking for more volunteer developers to help reach its ultimate goal of providing visually impaired people with a free, open-source, AI-based assistance system. “The user will be expected to buy the hardware on their own directly from the seller, so there is no intention of making any profit from the hardware and the AI software – the core component – we hope to provide free!”

Social Service

Mahendran is no stranger to social service. While completing his bachelor’s degree, he heard about social activist Narayan Krishnan, a Taj Hotel chef who transformed his life after seeing the hunger and poverty around him. Krishnan left his cushy, well-paying job and devoted himself to social work, distributing food to those in need. Inspired by him, Mahendran started a team and collected money to donate to Krishnan’s cause. Mahendran also joined United for Cause, a group started by a senior at his college, and through it taught impoverished kids “practical things – like using Excel, how to explain science in a simpler way and train them to get a minimum wage job.”

Unfortunately, the founders went their separate ways after a year, but Mahendran still hopes to revive the group one day. “We didn’t achieve anything significant, but tried to do something for the community and in our small way made a difference.” Tracing his romance with AI back to his undergraduate days in India, Mahendran says, “While completing my bachelor’s degree in India from PSIT, Bangalore in Information Sciences, we developed a micro-mouse robot, as an extracurricular activity. I enjoyed building the robot. After that I took many interesting electives, and it is through those that I became enamored by AI. Each course helped me get my foot in the door with AI and ignited my curiosity.”

Finding AI “fascinating,” Mahendran spent two years working for Sling Media, now Dish Network, before heading to the University of Georgia’s Institute for Artificial Intelligence for a master’s degree. A dutiful son, Mahendran credits his success to his mother, a homemaker who, though not highly educated, saw the value of education and encouraged him and his older brother to reach for the sky. “I don’t have enough words to express my gratitude to her. My mother is my biggest inspiration and whatever I am today is because of her,” says a grateful Mahendran.

Anu Ghosh immigrated to the U.S. from India in 1999. In India, she was a journalist for the Times of India in Pune for 8 years, and she is a graduate of the Symbiosis Institute of Journalism and Communication. In the U.S., she obtained her master’s and Ph.D. in Communications from The Ohio State University. Go Buckeyes! She has been involved in education for the last 15 years, as a professor at Oglethorpe University and then Georgia State University. She currently teaches Special Education at Oak Grove Elementary. She is also a mom to two precocious girls, ages 11 and 6.