The Private Sovereignty of Platforms: In the Age of Social Media, Power Without Accountability is No Longer Acceptable

The email arrived without warning. In March 2026, a veteran journalist who had spent years building an audience on Instagram and Threads woke to find both accounts erased. No specific violation cited. No archive preserved. No human reviewer named.

The automated notice referenced “community standards” on child safety—a category that, in recent years, has ensnared countless innocent posts: family photos, reporting on policy debates, even stock images. Meta later admitted similar errors in other cases, but the damage was done. Years of sources, threads, and public record—gone.

An appeal window existed on paper. It led nowhere.

This is no longer an edge case. It is the ordinary machinery of private power in 2026.

Social media has completed its transformation. What began as frivolous digital town squares—cat videos, status updates, fleeting arguments—now functions as the central nervous system of public life. News breaks here first. Reputations rise and fall in hours. Movements coalesce or collapse under algorithmic gravity. Civic debate, economic opportunity, emotional connection: all route through a handful of privately owned platforms whose reach dwarfs any historical precedent.

Yet their governance remains a black box. The architects are not cartoon villains. Many engineers who scaled today’s moderation systems—now heavily augmented by AI—believed they were building guardrails against spam, harassment, and manipulation. The problem lies deeper: power without accountability. Scale has outrun norms, just as it did with railroads in the Gilded Age, banks before the New Deal, and tech monopolies in the early internet era. Each time, private infrastructure that became essential to public life eventually collided with the demand for legitimacy. The difference now is the domain. This is not merely commerce. It is the architecture of discourse itself.

Platforms are not governments, yet they govern speech, visibility, and association at planetary scale. They are not public utilities, yet they host the functional equivalent of the town square. They claim the First Amendment rights of private editors while exercising authority once reserved for states.

Call it private sovereignty: entities that are legally private but functionally public, unbound by the procedural norms that constrain legitimate authority.

Recent experiments have only sharpened the point. Meta's 2025 pivot (replacing third-party fact-checking with Community Notes, narrowing automated enforcement to "illegal and high-severity" violations, and relying on user reports for lesser ones) promised less heavy-handed moderation. X has doubled down on AI-driven feeds and crowd-sourced notes.

Both moves responded to criticism of overreach. Yet the core complaint persists: when accounts vanish, the reasons remain opaque, the appeals perfunctory, the error rates hidden. DSA transparency reports released in early 2026 confirm the pattern: platforms actioned millions of pieces of content in the second half of 2025, yet appeals amounted to well under 1 percent of those decisions. With appeals that rare, volume is no longer an excuse. The technology that flags content at scale can also log decisions, surface explanations, and route appeals to human review.

Opacity is not a technical inevitability. It is a policy choice that shields inconsistent enforcement, political pressure, and commercial incentives from scrutiny.

What makes this moment distinct—and more urgent—is the arrival of generative AI as the primary moderator. Machine-learning systems now make the overwhelming majority of initial decisions. They are faster, cheaper, and, in many cases, more consistent than human teams. But they are also inscrutable. A “violation” notice that once came from a distant moderator now emerges from probabilistic models trained on opaque datasets. Even when platforms offer explanations, they are often generic templates that reveal nothing about the underlying logic.

This is not progress. It is the illusion of neutrality layered atop the same old arbitrariness. The same EU Digital Services Act that fined X €120 million in late 2025 for failures in advertising transparency and researcher access also underscores what is newly possible: standardized “statements of reasons,” auditable logs, and contestable processes. The United States has seen parallel pressure—FTC inquiries into censorship, court rulings holding platforms liable for addictive design, and a 2026 settlement limiting government jawboning. The lesson is consistent: neither pure laissez-faire nor command-and-control works. What is missing is a baseline architecture of legitimacy.

In every other sphere where private power shapes public outcomes, we long ago established minimum procedural guardrails. In many jurisdictions, employers must give cause for dismissal. Banks cannot freeze accounts on a whim. Courts demand notice and an opportunity to be heard.

We tolerated looser standards on social media because the stakes once felt trivial. They no longer are. Network effects still concentrate users, but the post-2024 landscape shows cracks: Threads, Bluesky, decentralized protocols, and even newsletter ecosystems are eroding absolute monopoly. Exit is becoming marginally less costly. That very competition creates space for platforms to differentiate on trust rather than merely on engagement.

Legitimacy today requires more than good intentions. It demands:

• A clear, specific statement of the rule violated.

• An explanation that is intelligible, not boilerplate, including (for AI decisions) the key factors and confidence score.

• A meaningful, time-bound appeal with human oversight for contested cases.

• A public, anonymized record of decisions that permits independent scrutiny while protecting privacy.

• Explicit safeguards against coordinated abuse, such as bot-driven mass reporting, that currently weaponizes the system against journalists and dissidents.

These are not utopian. They are the procedural minimums the EU is already forcing on very large platforms and that U.S. courts increasingly recognize as compatible with editorial discretion. The objection that due process would “slow everything down” or be gamed by bad actors has been tested before—in finance, in labor law, in every regulated industry. The result has been more resilient systems, not paralyzed ones. With modern AI, real-time flagging can coexist with post-hoc review; appeals can be parallelized; bad-faith actors can be rate-limited or flagged by the same models that moderate content.

Platforms will not adopt these norms through goodwill alone. External pressure—regulation that sets floors rather than dictating speech rules, industry standards, user-rights litigation, and competitive incentives—will be required. The goal is not to turn platforms into public utilities or governments. It is to recognize that when private infrastructure becomes the default arena for public life, certain obligations attach: not content neutrality, but procedural legitimacy.

History is instructive. Institutions rarely volunteer for accountability until compelled. Once they accept it, they endure. Railroads survived common-carrier rules. Banks survived deposit insurance and disclosure mandates. The digital public square can survive—indeed, thrive under—basic due process.

What is at stake is no longer abstract. In an age of AI amplification, private sovereignty without answerability does not merely annoy. It distorts the public sphere, chills legitimate journalism, entrenches unaccountable gatekeepers, and invites heavier-handed state intervention from every direction. A public square governed as a private gate is inherently unstable. It breeds cynicism, conspiracy, and withdrawal—the very poisons that weaken democratic culture.

The minimum is now the necessary. Transparency is not a slogan; it is the condition of legitimacy. Due process is not a concession to critics; it is the foundation of any authority that claims to serve the public. Accountability is not a threat to innovation; it is what separates durable platforms from brittle monopolies.

A gate that can close without explanation is not governance. It is power without answer. And in the infrastructure of modern public life, that is no longer acceptable.

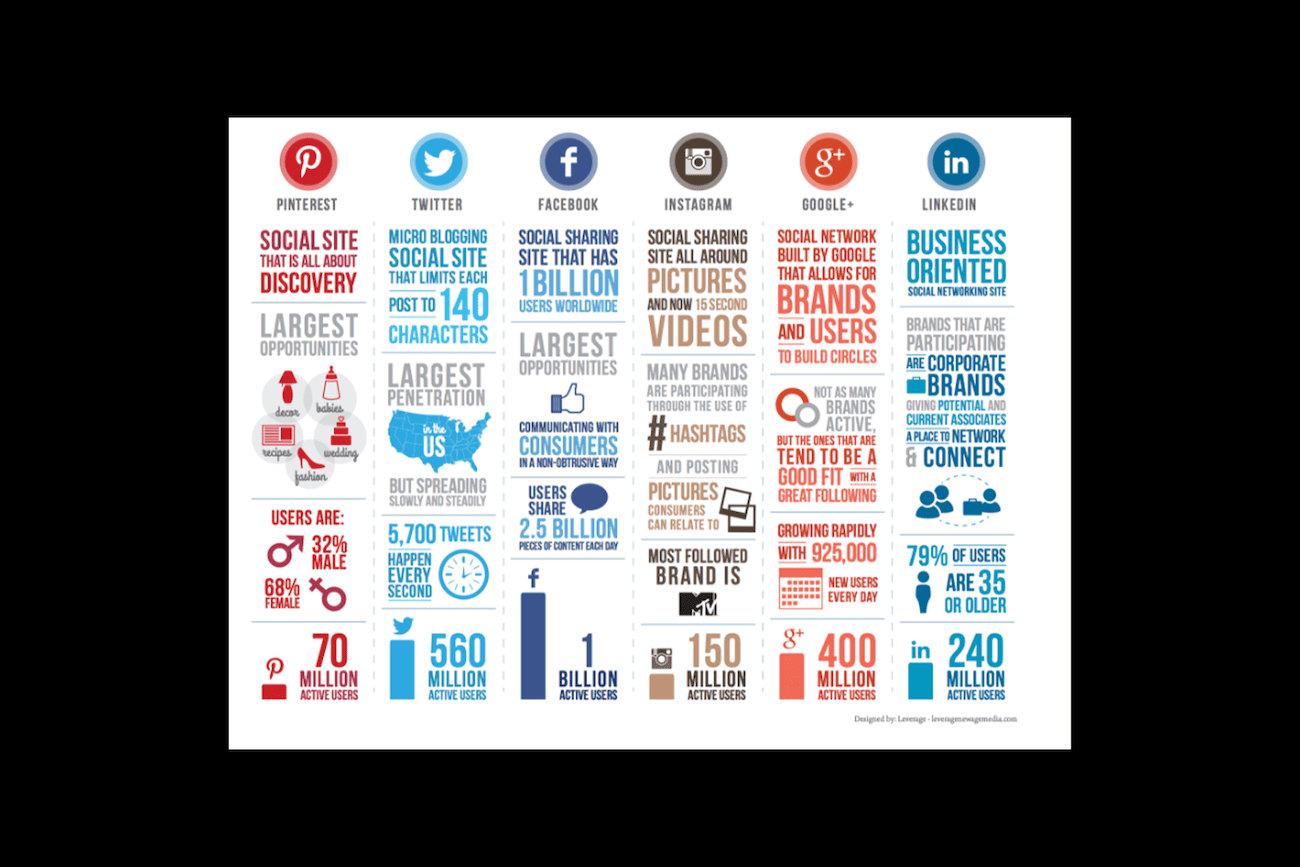

Top image: courtesy of https://www.leveragestl.com/social-media-infographic/

Satish Jha co-founded India’s national Hindi daily Jansatta for the Indian Express Group and was Editor of the national newsweekly Dinamaan of The Times of India Group. He has held CXO roles in Fortune 100 companies in Switzerland and the United States and has been an early-stage investor in around 50 U.S. startups. He led One Laptop per Child (OLPC) in India and currently serves on the board of the Vidyabharati Foundation of America, which supports over 14,000 schools educating 3.5 million students across India. He also chairs Ashraya, which supports about 27,000 students through its One Tablet per Child initiative.